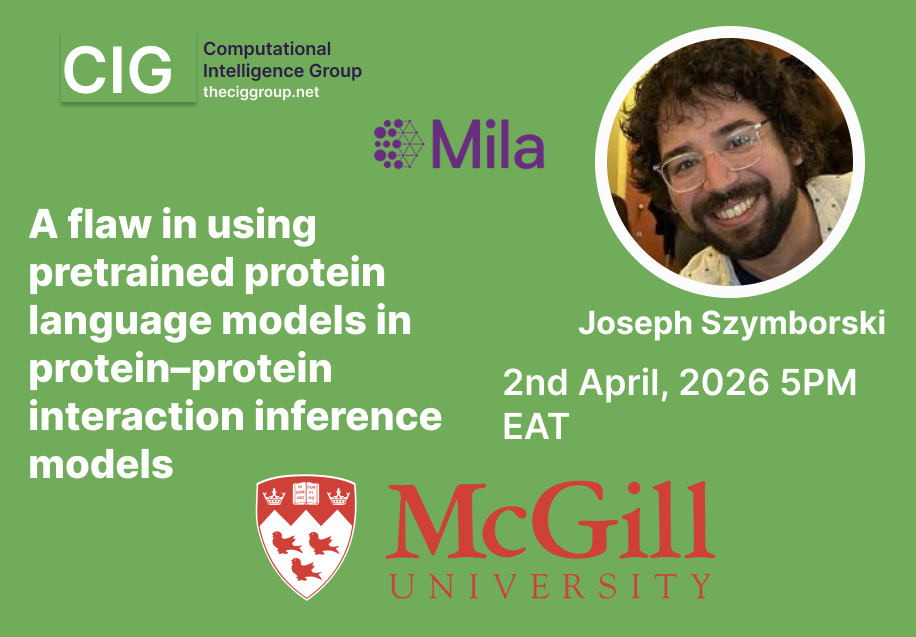

A flaw in using pretrained protein language models in protein–protein interaction inference models

Abstract

With the growing pervasiveness of pretrained protein language models (pLMs), pLM-based methods are increasingly being put forward for the protein–protein interaction (PPI) inference task. Here we identify and confirm that existing pretrained pLMs are a source of data leakage for the downstream PPI task. We characterize the extent of the data leakage problem by training and comparing small, efficient pLMs on a dataset that controls for data leakage ("strict") and on one that does not ("non-strict"). Although data leakage from pretrained pLMs causes a measurable inflation of test scores, we find that this inflation does not necessarily extend to other, non-paired biological tasks such as protein keyword annotation. Further, we find no connection between the context length of a pLM and the performance of pLM-based PPI inference methods on proteins whose sequences are longer than that context length. We also show that both pLM-based and non-pLM-based models fail to generalize to tasks such as predicting human–SARS-CoV-2 PPIs or predicting the effect of point mutations on binding affinities. This study demonstrates the importance of extending existing protocols for evaluating pLM-based models applied to paired biological datasets, and it identifies areas of weakness in current pLMs.
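To make the strict/non-strict distinction concrete, the following is a minimal sketch. It assumes that "strict" means the pLM pretraining corpus contains no protein that appears in any downstream PPI test pair, and that proteins are matched by exact identifier only. A real protocol may also remove sequences that are merely similar to test proteins. The function names, protein IDs, and toy sequences are hypothetical and are not taken from the paper.

```python
from typing import Dict, Iterable, List, Set, Tuple

Pair = Tuple[str, str]


def proteins_in_pairs(pairs: Iterable[Pair]) -> Set[str]:
    """Collect every protein ID that appears on either side of a PPI pair."""
    ids: Set[str] = set()
    for a, b in pairs:
        ids.add(a)
        ids.add(b)
    return ids


def strict_pretraining_corpus(corpus: Dict[str, str], test_pairs: Iterable[Pair]) -> Dict[str, str]:
    """Hypothetical 'strict' filter: drop any pretraining sequence whose ID
    occurs in a test PPI pair (exact-ID matching only, no similarity search)."""
    held_out = proteins_in_pairs(test_pairs)
    return {pid: seq for pid, seq in corpus.items() if pid not in held_out}


def leaked_test_pairs(pretrain_ids: Set[str], test_pairs: Iterable[Pair]) -> List[Pair]:
    """Return test pairs in which at least one protein was seen during pretraining."""
    return [(a, b) for a, b in test_pairs if a in pretrain_ids or b in pretrain_ids]


# Toy data with hypothetical IDs and sequences.
corpus = {"P1": "MKT", "P2": "GAV", "P3": "LLK", "P4": "QRS"}
test_pairs = [("P1", "P5"), ("P6", "P7")]

strict = strict_pretraining_corpus(corpus, test_pairs)
print(sorted(strict))                              # proteins kept for pretraining
print(leaked_test_pairs(set(corpus), test_pairs))  # leakage under the non-strict corpus
print(leaked_test_pairs(set(strict), test_pairs))  # leakage under the strict corpus
```

In the non-strict case, the pair ("P1", "P5") counts as leaked because "P1" was seen during pretraining. After the strict filter is applied, no test pair shares a protein with the pretraining corpus.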